RESPIRE

2018

Kıvanç Tatar, Mirjana Prpa, and Philippe Pasquier

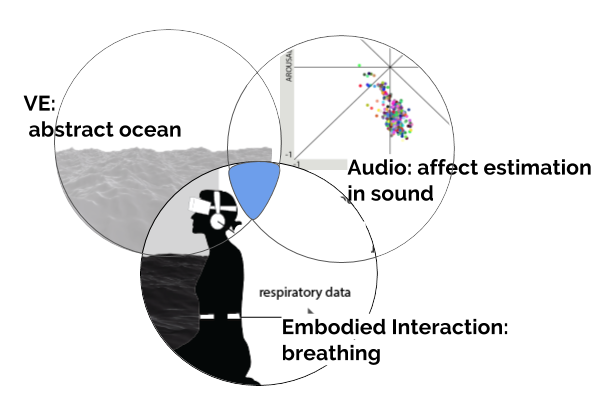

Respire is an immersive art piece that brings together three components: a virtual reality (VR) environment, embodied interaction via a breathing sensor, and a musical agent system. Together they generate unique experiences of augmented breathing. The breathing sensor controls the vertical elevation of the user’s point of view, moving it below and above the surface of a virtual ocean. The frequency and patterns of the breathing data guide both the arousal of the musical agent and the waviness of the virtual ocean. Respire proposes an intimate exploration of breathing through an intelligent mapping of breathing data to the parameters of the visual and sonic environments.
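The kind of breath-to-scene mapping described above can be illustrated with a minimal Python sketch. This is not the implementation used in Respire. The names (`SceneParams`, `breaths_per_minute`, `map_breath`), the mean-crossing estimate of breathing rate, the 4 to 20 breaths-per-minute range, the convention that inhaling raises the viewpoint, and the choice to let waviness simply follow arousal are all hypothetical assumptions made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class SceneParams:
    """Hypothetical per-frame parameters for the visual and sonic environment."""
    elevation: float  # viewpoint height relative to the ocean surface; negative = underwater
    arousal: float    # 0..1 arousal level sent to the musical agent
    waviness: float   # 0..1 scale factor for the ocean's wave amplitude


def breaths_per_minute(samples, sample_rate_hz):
    """Estimate breathing rate by counting upward crossings of the signal mean."""
    if len(samples) < 2:
        return 0.0
    mean = sum(samples) / len(samples)
    # One upward crossing of the mean is counted per breath cycle.
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if a < mean <= b)
    duration_min = len(samples) / sample_rate_hz / 60.0
    return crossings / duration_min


def map_breath(samples, sample_rate_hz, min_bpm=4.0, max_bpm=20.0, max_height=2.0):
    """Map a window of breathing-sensor readings to scene parameters.

    Assumption: a higher sensor reading means more air in the lungs (inhale).
    """
    lo, hi = min(samples), max(samples)
    # Normalize the latest reading to [-1, 1]: -1 = fully exhaled, +1 = fully inhaled.
    norm = 0.0 if hi == lo else 2.0 * (samples[-1] - lo) / (hi - lo) - 1.0
    elevation = norm * max_height  # exhaling sinks the viewpoint below the surface

    # Faster breathing -> higher arousal, clamped to [0, 1].
    bpm = breaths_per_minute(samples, sample_rate_hz)
    arousal = min(1.0, max(0.0, (bpm - min_bpm) / (max_bpm - min_bpm)))

    # Simplification: waviness follows arousal directly in this sketch.
    return SceneParams(elevation=elevation, arousal=arousal, waviness=arousal)


if __name__ == "__main__":
    # Synthetic 60 s recording at 10 Hz of slow breathing (12 breaths per minute).
    rate = 10.0
    signal = [-math.cos(2 * math.pi * 0.2 * i / rate) for i in range(600)]
    print(map_breath(signal, rate))
```

With the synthetic 12-breaths-per-minute signal, the sketch yields an arousal of 0.5 and a viewpoint below the surface, since the recording ends near full exhalation. A live system would more likely run this over a sliding window and smooth the outputs between frames to avoid abrupt jumps in the scene.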

The environment evokes a dark, gloomy atmosphere, with elements such as fog and waves that wrap around the user. Ambiguous visuals and the lack of focal objects encourage the user to actively make sense of the scene. This ambiguity in the design of the interactive artifacts invites users to project their own values and experiences onto the visual stimuli as they make meaning of them. Respire also employs a minimalist color scheme to elicit the “beholder’s share” effect, in which the viewer’s own perception completes the work.

Publications

-> Prpa M., Tatar K., Françoise J., Riecke B., Schiphorst T., & Pasquier P. (2018). Attending to Breath: Exploring How the Cues in a Virtual Environment Guide the Attention to Breath and Shape the Quality of Experience to Support Mindfulness. In Proceedings of the 2018 Designing Interactive Systems Conference (pp. 71–84). ACM Press. https://doi.org/10.1145/3196709.3196765

-> Prpa M., Tatar K., Schiphorst T., & Pasquier P. (2018). Respire: A Breath Away from the Experience in Virtual Environment. In Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems (CHI EA ’18, ArtCHI) (pp. 1–6). Montreal, Canada: ACM Press. https://doi.org/10.1145/3170427.3180282

Acknowledgements

This work was supported by the Natural Sciences and Engineering Research Council of Canada and the Social Sciences and Humanities Research Council of Canada.